Many people use ChatGPT regularly to automate a variety of tasks. If you’ve used it for any length of time, you’ve probably noticed that it occasionally gives incorrect information and has little to no context on some specialized subjects. This raises the question of how we can close that gap and give ChatGPT access to more customized data.

As chatbots and AI-powered conversational agents continue to gain traction in various industries, the need for customized solutions that fit specific use cases becomes more apparent.

With the advent of powerful language models like OpenAI’s GPT series, developing a bespoke chatbot with a tailored knowledge base is now possible.

- Understand Your Chatbot’s Purpose

Understanding the main objective of your chatbot is essential before getting into the technical details of creating your custom ChatGPT.

Decide on the target audience, the context in which the bot will be used, and the kind of knowledge base you want to build. These decisions will guide the development process and help ensure that your bot meets your particular requirements.

- Feeding Data via Prompt Engineering

Before moving on to how we can extend ChatGPT with an index, let’s look at how we could do it manually and what the problems are. The standard method for extending ChatGPT is prompt engineering.

Since ChatGPT is context aware, doing this is very easy: we simply prepend the original document’s text to the question before sending it. The drawback is that the model accepts only a limited amount of text per request, so this approach breaks down once your documents grow beyond that limit.
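As a minimal sketch of this manual approach, the helper below places the document text ahead of the question in a single prompt string. The function name `build_prompt` and the wording of the instruction line are illustrative assumptions, not part of any library.

```python
def build_prompt(document_text: str, question: str) -> str:
    """Place the document text ahead of the question in one prompt."""
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{document_text.strip()}\n\n"
        f"Question: {question.strip()}\n"
        "Answer:"
    )


if __name__ == "__main__":
    # Hypothetical document text, used only for illustration.
    doc = "Our support desk is open Monday to Friday, 9am to 5pm."
    print(build_prompt(doc, "When is the support desk open?"))
```

The resulting string can be pasted into ChatGPT or sent through the API. It works well for short documents, but the whole document travels with every question.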

- Leverage LlamaIndex (GPT Index)

LlamaIndex, formerly known as GPT Index, is a project that provides a central interface for connecting your LLMs with external data.

It provides indices over your structured and unstructured data so that LLMs can make use of that data. These indices support in-context learning by removing common boilerplate and pain points and by storing context in an easily accessible form for quick insertion into a prompt.

Before we start, make sure you have access to the following:

- Python 3.7 or later installed on your system

- An OpenAI API key. You can sign in to OpenAI with your Google account to create one.

How It Works

- Create a document data index with LlamaIndex.

- Use natural language to search the index.

- LlamaIndex retrieves the relevant pieces and passes them to the GPT prompt. It transforms your original document data into a query-friendly vectorized index, then uses this index to find the most relevant sections based on how closely the query and the data match. Those sections are loaded into the prompt that is sent to GPT, so that GPT has the background it needs to answer your question.

- After that, you can ask ChatGPT questions, and it answers them using the supplied context.
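The steps above can be illustrated with a toy retrieval sketch. This is not how LlamaIndex is implemented: it assumes simple word counts in place of real embeddings, and every function name here is hypothetical. It only shows the idea of indexing chunks, ranking them against a query, and keeping the closest match for the prompt.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def vectorize(text: str) -> Counter:
    """Toy vectorizer: lowercase word counts (a stand-in for embeddings)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(chunks: List[str]) -> List[Tuple[str, Counter]]:
    """Pair every chunk of document text with its vector."""
    return [(chunk, vectorize(chunk)) for chunk in chunks]


def retrieve(index: List[Tuple[str, Counter]], query: str, top_k: int = 1) -> List[str]:
    """Rank chunks by similarity to the query and keep the best top_k."""
    query_vec = vectorize(query)
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:top_k]]


if __name__ == "__main__":
    # Hypothetical document chunks, used only for illustration.
    chunks = [
        "The refund policy allows returns within 30 days.",
        "Shipping takes five business days.",
        "Gift cards never expire.",
    ]
    question = "How long does shipping take?"
    best = retrieve(build_index(chunks), question)
    print("Context:\n" + "\n".join(best) + "\n\nQuestion: " + question)
```

Only the retrieved chunk is placed in the prompt, which is what keeps the request small even when the document collection is large.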

Install the dependency libraries first:

    pip install openai
    pip install llama-index
    pip install google-auth-oauthlib

Next, import the libraries in Python and set up your OpenAI API key in a new main.py file.

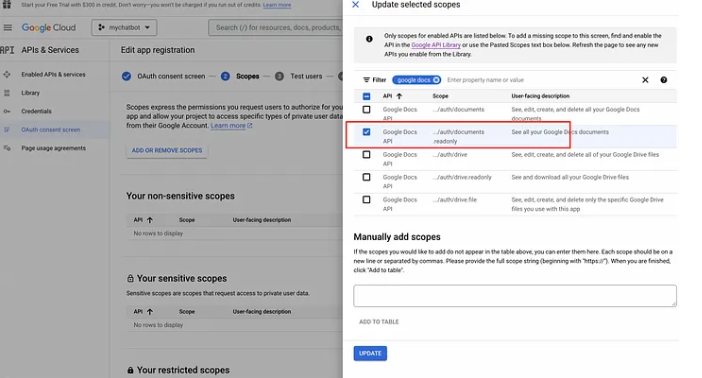

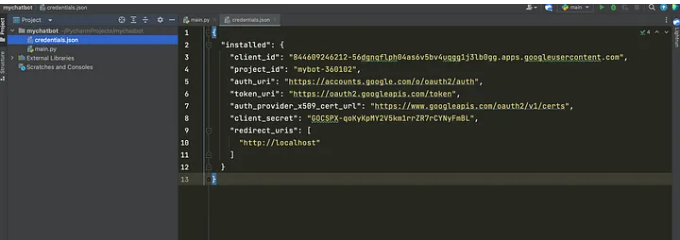
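One common way to supply the key, sketched here under the assumption that it is stored in the `OPENAI_API_KEY` environment variable (which the `openai` package reads by default), is to check for it at startup rather than hard-coding it in main.py. The helper name `require_api_key` is hypothetical.

```python
import os


def require_api_key(env=os.environ) -> str:
    """Return the OpenAI API key from the environment, or fail clearly."""
    key = env.get("OPENAI_API_KEY", "").strip()
    if not key:
        raise RuntimeError("Set the OPENAI_API_KEY environment variable first.")
    return key


if __name__ == "__main__":
    try:
        require_api_key()
        print("OpenAI API key found.")
    except RuntimeError as err:
        print(err)
```

Keeping the key out of the source file also means it does not end up in version control by accident.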

Next, enable the Google Docs API and create credentials in the Google Cloud Console.

Once your credentials are set up, download the JSON file and store it in the root of your application.

Then open a few of your Google Docs and copy each document’s unique ID, which appears in the URL in your browser’s address bar.
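If you have many documents, copying IDs by hand gets tedious. The sketch below pulls the ID out of a Google Docs URL, assuming the usual `https://docs.google.com/document/d/<ID>/edit` layout. The helper name and the sample URL are made up for illustration.

```python
import re
from typing import Optional

# The ID is the path segment that follows "/document/d/".
_DOC_ID = re.compile(r"/document/d/([A-Za-z0-9_-]+)")


def extract_doc_id(url: str) -> Optional[str]:
    """Return the document ID from a Google Docs URL, or None if absent."""
    match = _DOC_ID.search(url)
    return match.group(1) if match else None


if __name__ == "__main__":
    # Placeholder URL; replace it with one copied from your browser.
    print(extract_doc_id("https://docs.google.com/document/d/abc123_XYZ-9/edit"))
```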

Internally, LlamaIndex accepts your prompt, searches the index for relevant chunks, and sends both your prompt and those chunks to the ChatGPT model. The previous steps show how to use LlamaIndex and GPT for question answering in its most basic form. There is, however, a lot more you can do: you can configure LlamaIndex to use a different large language model (LLM), use a different type of index for different tasks, or update existing indices programmatically. You are limited only by your creativity.
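For orientation, here is a hedged sketch of the basic index-and-query flow. It assumes llama-index 0.10 or later, where `Document` and `VectorStoreIndex` are imported from `llama_index.core`; older releases used different names, so check the version you have installed. It also assumes plain text strings instead of the Google Docs loader, and the helper names `build_query_engine` and `ask` are hypothetical.

```python
from typing import List


def build_query_engine(texts: List[str]):
    """Index plain text strings and return a query engine.

    Assumes llama-index >= 0.10 and an OPENAI_API_KEY in the environment.
    The import is deferred so the module loads without the package.
    """
    from llama_index.core import Document, VectorStoreIndex

    documents = [Document(text=text) for text in texts]
    index = VectorStoreIndex.from_documents(documents)
    return index.as_query_engine()


def ask(query_engine, question: str) -> str:
    """Send a question to any object exposing query() and return text."""
    return str(query_engine.query(question))


if __name__ == "__main__":
    # Makes real API calls; the sample text is purely illustrative.
    engine = build_query_engine(["Shipping takes five business days."])
    print(ask(engine, "How long does shipping take?"))
```

Separating `ask` from index construction means the same call works whichever index type or LLM the engine is configured with.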

Conclusion

Using LlamaIndex and ChatGPT, you can build a chatbot that answers questions based on your own document sources. Even though ChatGPT and other LLMs are quite capable on their own, extending them with your data offers a much more refined experience and makes it possible to build conversational chatbots for real business use cases such as customer support assistance or even spam classification. Because we can feed in current data at query time, this approach also works around one limitation of ChatGPT models: their knowledge stops at the point where their training data ends.

For more details contact info@vafion.com
